How to generate images using AI?

Advances in AI now let anyone create striking art and images, including images that would be difficult or even impossible for humans to create manually. Let's look at two popular methods of image generation:

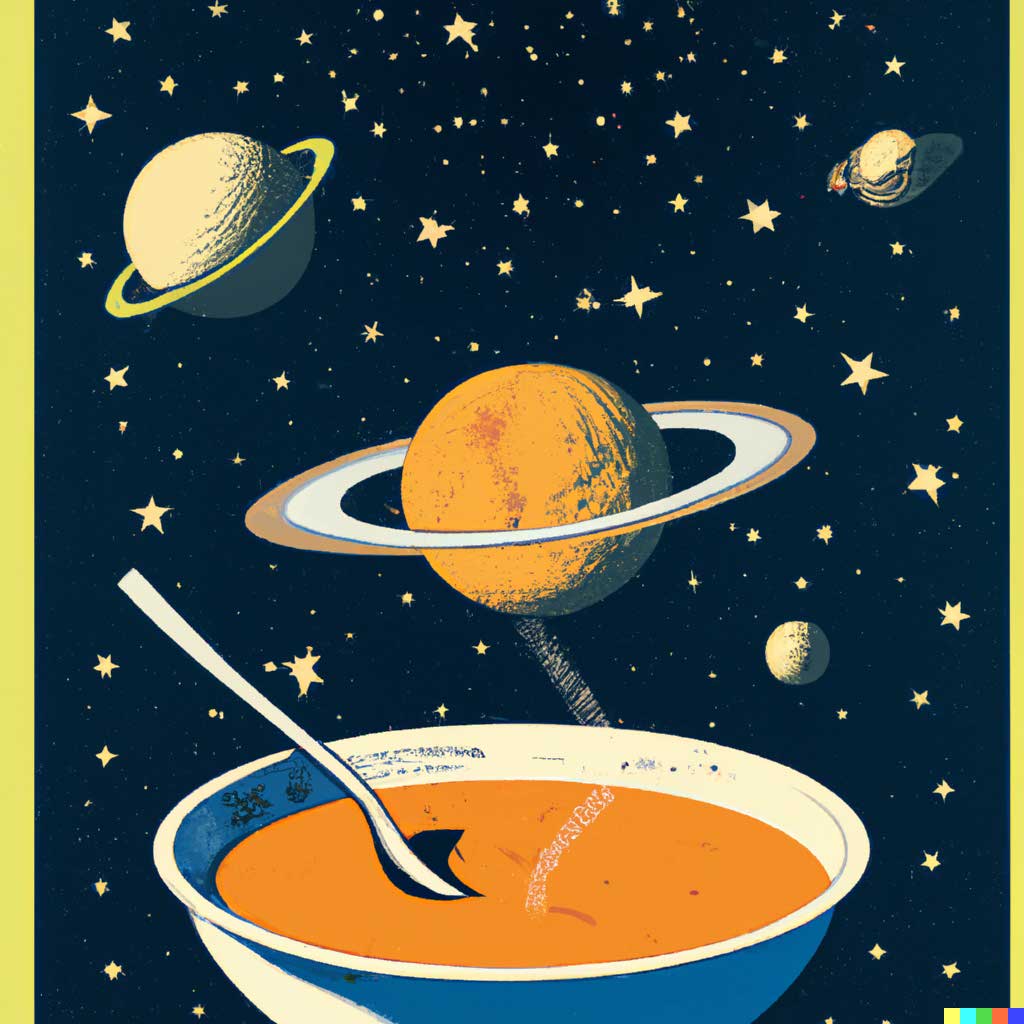

- Text-to-image models generate images based on a given text description.

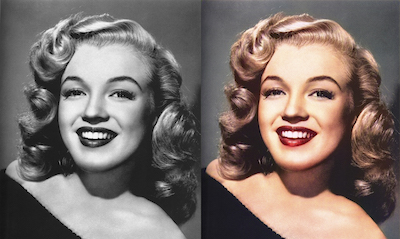

- Style transfer models generate images in a specific artistic style.

Text-to-image models

Text-to-image models generate images based on a text description. These models are trained on a dataset of images and their corresponding textual descriptions, and they learn to generate images that match the description.

One way to generate images from text is to use an encoder-decoder architecture, where the text is converted into a compact code that is then used to create an image (the Variational Autoencoder, or VAE, is a well-known architecture of this kind). Another way is to use a Generative Adversarial Network, where the text description guides the generator as it creates the image.
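To make the encoder-decoder idea concrete, here is a toy sketch of the data flow only: text goes in, a small fixed-length code comes out, and that code is expanded into a grid of pixel values. Nothing here is trained. The functions `encode` and `decode`, the constants `LATENT_DIM` and `IMAGE_SIDE`, and the random weights are all hypothetical stand-ins for what a real model would learn from image and caption pairs.

```python
import random

LATENT_DIM = 4   # size of the compact code (hypothetical choice)
IMAGE_SIDE = 8   # the toy output is an 8x8 grayscale image (hypothetical choice)

def encode(text, dim=LATENT_DIM):
    """Map text to a fixed-length list of floats in [0, 1).

    A trained model would use a learned text encoder; this stand-in
    simply buckets character codes so the example stays self-contained.
    """
    code = [0.0] * dim
    for i, ch in enumerate(text.lower()):
        code[i % dim] += ord(ch)
    return [(v % 256) / 256 for v in code]

def decode(code, side=IMAGE_SIDE, seed=0):
    """Expand the code into a side x side grid of intensities in [0, 1).

    The fixed random weights stand in for a learned decoder network.
    """
    rng = random.Random(seed)
    weights = [[rng.random() for _ in code] for _ in range(side * side)]
    pixels = [sum(w * c for w, c in zip(row, code)) / len(code) for row in weights]
    return [pixels[r * side:(r + 1) * side] for r in range(side)]

image = decode(encode("a red apple on a table"))
print(len(image), "rows of", len(image[0]), "pixels")
```

The output of this toy is noise rather than a picture. What it shows is the shape of the pipeline: the decoder only ever sees the compact code, so everything the image needs must be captured in that code.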

Some of the most popular text-to-image models are Stable Diffusion, DALL-E 2, and Imagen.

- Generative Adversarial Network

A Generative Adversarial Network (GAN) generates new data that resembles its training dataset.

A GAN is composed of two parts: the generator and the discriminator. The generator creates new data that should look similar to the original, and the discriminator tries to figure out whether a given sample is real or fake. The two play a game against each other: the generator tries to trick the discriminator, and the discriminator tries to catch the generator. Each side learns from the other, so the generator gets better at producing convincing samples while the discriminator gets better at spotting fakes.

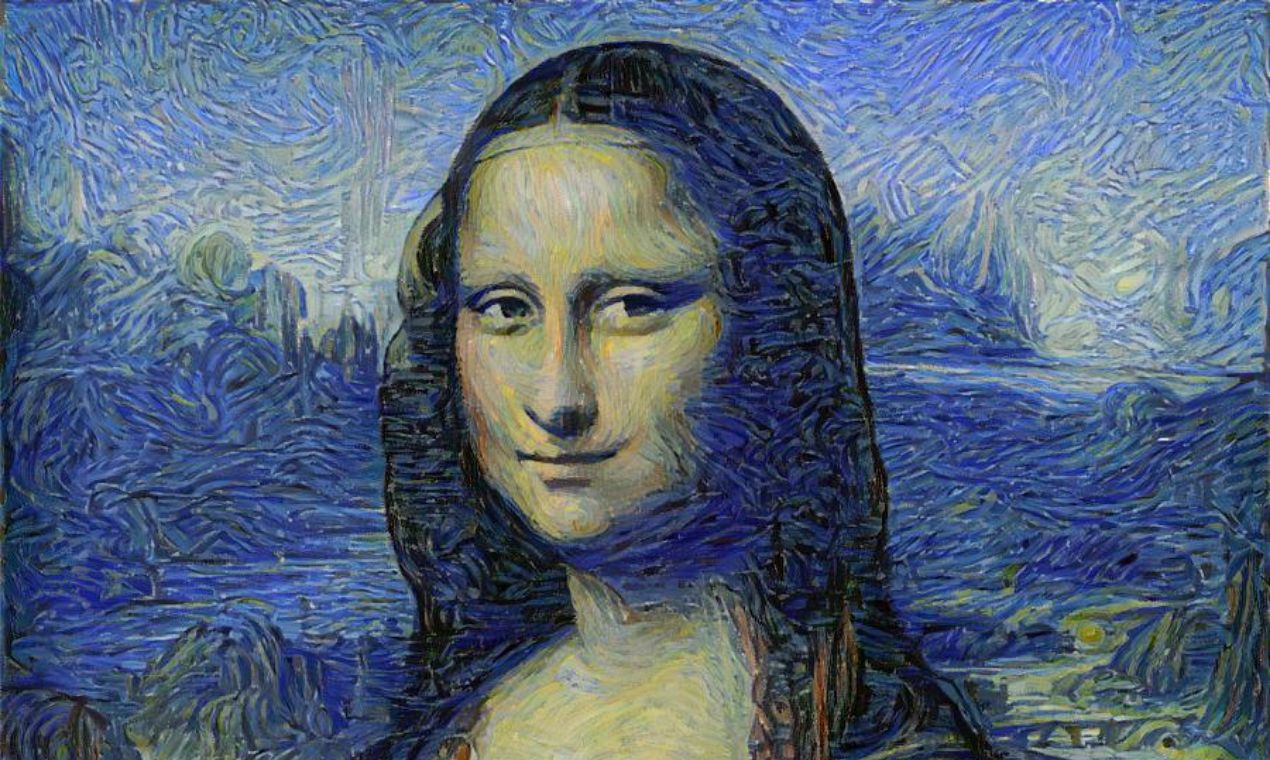
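The game between the generator and the discriminator can be sketched with a deliberately tiny example. Instead of images, the "real data" here is just numbers drawn near 4, the generator is a straight line `a * z + b`, and the discriminator is a single logistic unit. These choices, along with the names `train`, `discriminator_loss`, and `generator_loss` and the learning rate, are assumptions made for illustration. Real image GANs use deep networks and an automatic differentiation library rather than hand-written gradients.

```python
import math
import random

def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def discriminator_loss(d_real, d_fake):
    """Low when real samples score near 1 and generated samples score near 0."""
    return -(math.log(d_real) + math.log(1.0 - d_fake))

def generator_loss(d_fake):
    """Low when the discriminator scores generated samples near 1."""
    return -math.log(d_fake)

def train(steps=500, lr=0.05, seed=0):
    rng = random.Random(seed)
    a, b = 1.0, 0.0   # generator parameters: x_fake = a * z + b
    w, c = 0.0, 0.0   # discriminator parameters: D(x) = sigmoid(w * x + c)
    for _ in range(steps):
        x_real = rng.gauss(4.0, 0.5)   # a "real" sample
        z = rng.gauss(0.0, 1.0)        # random noise fed to the generator
        x_fake = a * z + b             # a generated sample

        # Discriminator turn: one gradient step on discriminator_loss.
        d_real = sigmoid(w * x_real + c)
        d_fake = sigmoid(w * x_fake + c)
        w -= lr * ((d_real - 1.0) * x_real + d_fake * x_fake)
        c -= lr * ((d_real - 1.0) + d_fake)

        # Generator turn: one gradient step on generator_loss,
        # using the discriminator that was just updated.
        d_fake = sigmoid(w * x_fake + c)
        a -= lr * (d_fake - 1.0) * w * z
        b -= lr * (d_fake - 1.0) * w
    return a, b, w, c

a, b, w, c = train()
print("generator: x = %.2f * z + %.2f" % (a, b))
```

The two updates alternate inside one loop, which mirrors the back-and-forth described above. GAN training is known to be unstable, so this toy may oscillate rather than settle. It is meant to show the structure of the game, not a reliable recipe.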

Style transfer models

Style transfer models can generate an image in the style of a particular artist or painting. These models are trained on a dataset of images and their corresponding styles, and they learn to apply the style of one image to the content of another image.
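One way to picture what "style" means to such a model comes from the classic neural style transfer approach, which is an optimization-based technique rather than the dataset-trained setup described above. It summarizes style as a Gram matrix: a table of how strongly each pair of feature channels fires together, regardless of where in the image that happens. The sketch below assumes the features are already available as plain lists of numbers. In practice they would come from a pretrained image network, and the helper names `gram_matrix` and `style_loss` are hypothetical.

```python
def gram_matrix(features):
    """Channel-by-channel correlations of a feature map.

    `features` is a list of channels, each a flat list of activations
    with one value per spatial position.
    """
    n = len(features[0])
    return [
        [sum(x * y for x, y in zip(fi, fj)) / n for fj in features]
        for fi in features
    ]

def style_loss(features_a, features_b):
    """Sum of squared differences between the two Gram matrices."""
    gram_a = gram_matrix(features_a)
    gram_b = gram_matrix(features_b)
    return sum(
        (x - y) ** 2
        for row_a, row_b in zip(gram_a, gram_b)
        for x, y in zip(row_a, row_b)
    )

# Two feature maps with the same activations at shuffled positions.
original = [[1, 2, 3], [4, 5, 6]]
shuffled = [[3, 1, 2], [6, 4, 5]]
print(style_loss(original, shuffled))
```

Because the Gram matrix sums over positions, rearranging where things appear leaves the style measurement unchanged. That is why this kind of measure captures texture and color patterns while the content of the image is handled separately.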

What is DALL-E and DALL-E 2?

DALL-E and DALL-E 2 are deep learning image generation models developed by OpenAI. They are capable of generating high-resolution images from natural language descriptions, such as "oil painting of water lilies with vibrant brushstrokes using a happy color palette". DALL-E 2 can generate both abstract and highly detailed images from text. These models can also be used to edit existing images to match a given description.

DALL-E is groundbreaking vision research from OpenAI that aims to do what technology does best: make it easy for ordinary people to gain the superpowers of the talented and rich. These models are currently private, but DALL-E Mini is an open-source project that attempts to replicate the same results.

Hotpot.ai makes it easy to harness the power of AI art generators such as Stable Diffusion and DALL-E 2. Try it today for free.
